Video Guidance System for Automated Drone Flight

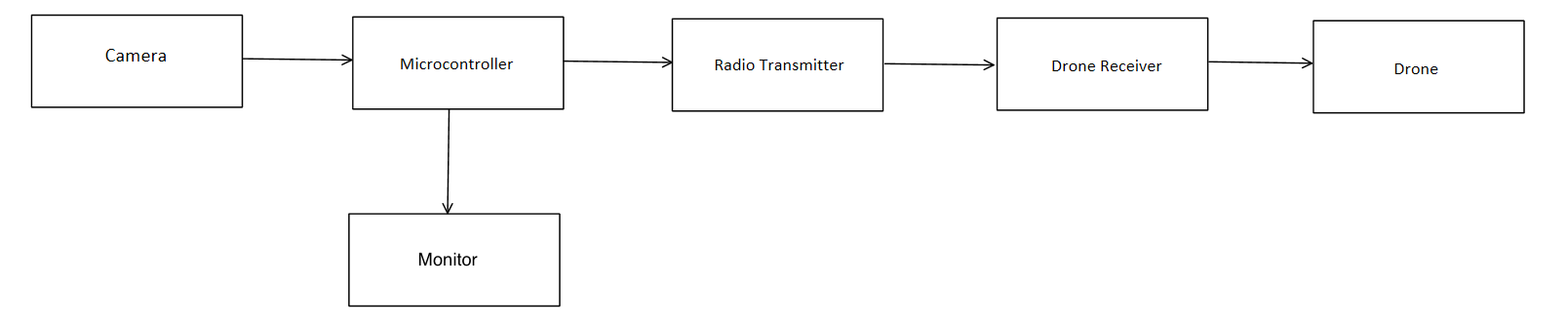

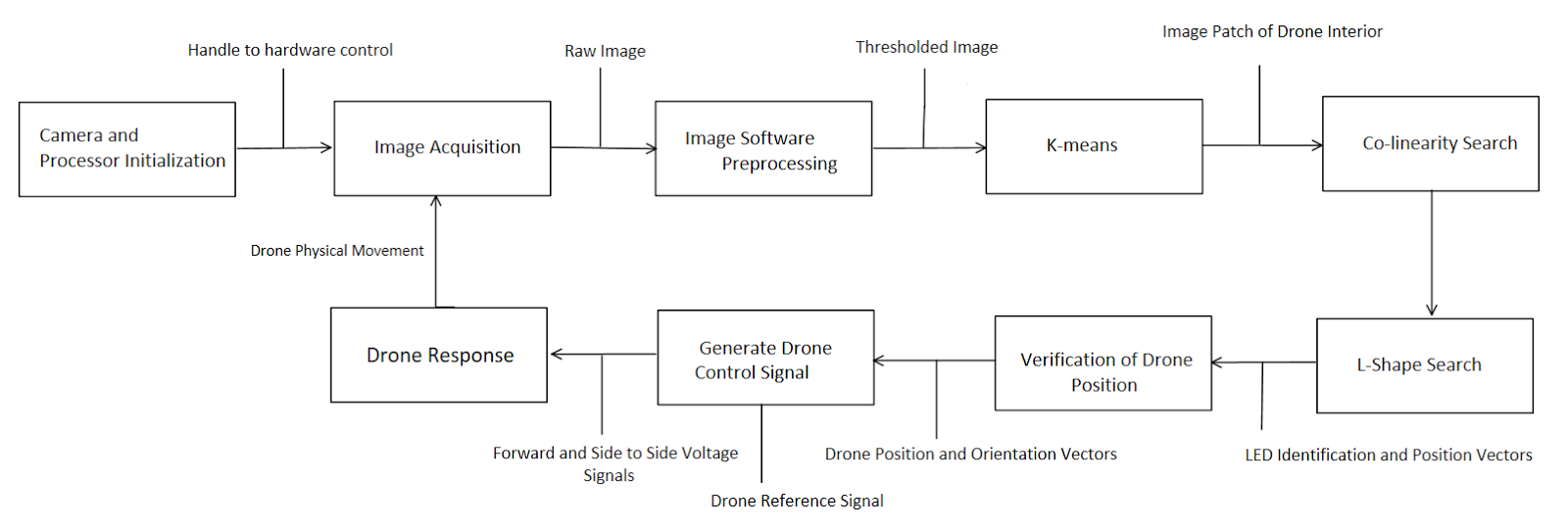

Landing a drone accurately is one of the hardest parts of operating it, especially when the operator is far from the landing zone. The goal of this project is to automate the landing process. The system is designed to “talk” to the drone, centering it over the landing zone with the correct orientation, and then to send the control signal that starts the landing subroutine.

Hardware:

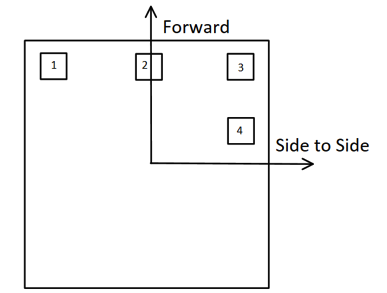

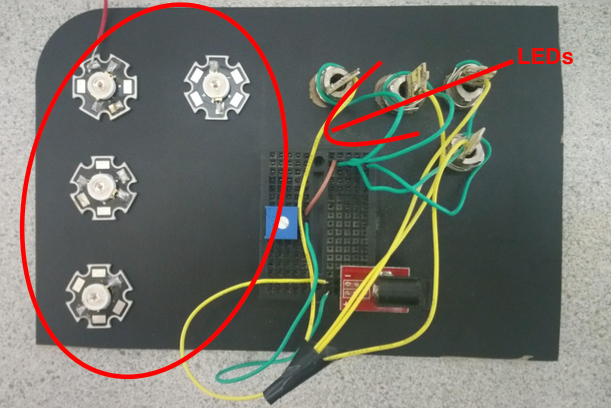

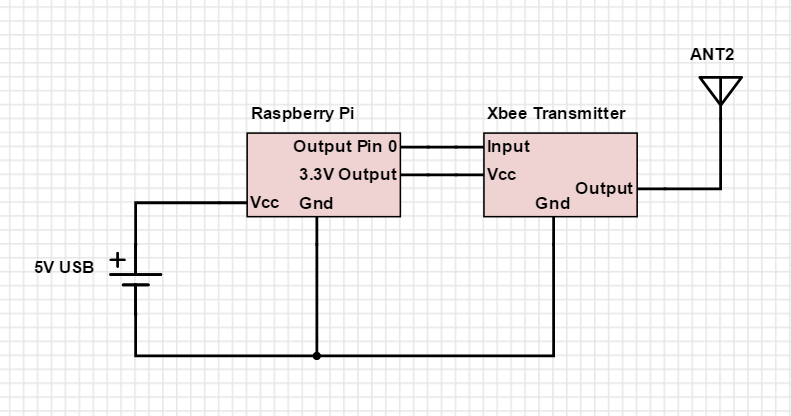

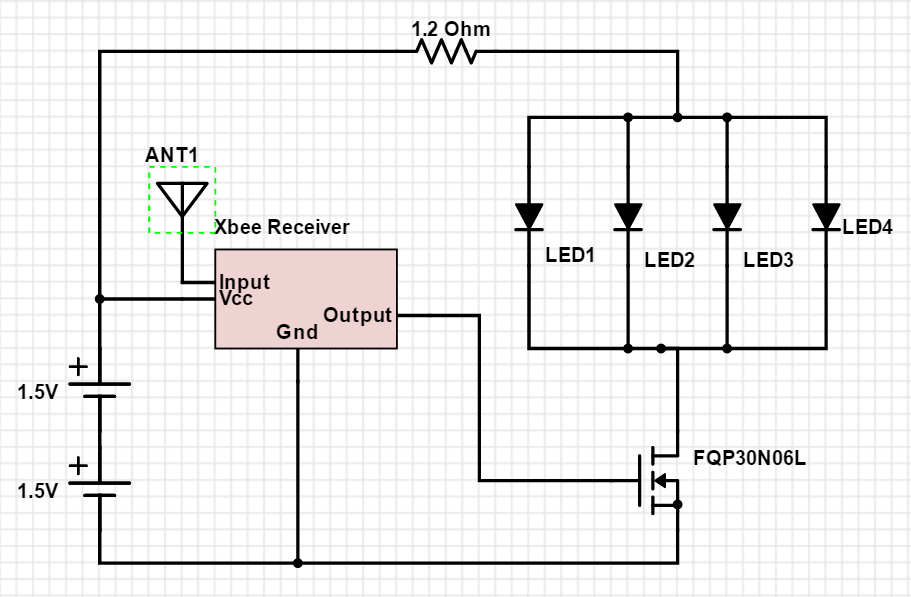

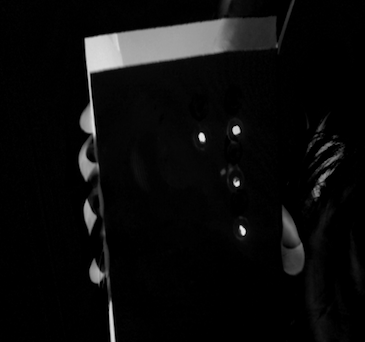

The hardware consisted of a portable processing unit (in this case a Raspberry Pi) and an image sensor (a standard Raspberry Pi camera). To help identify the drone, an LED attachment is mounted on the bottom of the drone. The LEDs are arranged in a non-symmetrical pattern for direction recognition, so that the system can tell which way the drone is pointing. Communication between the drone attachment and the Raspberry Pi was established using XBee modules.

Software:

The software combines image processing (filtering, thresholding, convolution) with machine learning (k-means).

Image Preprocessing:

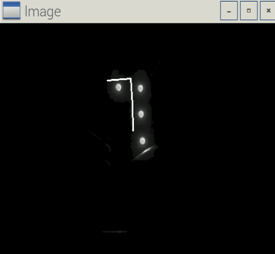

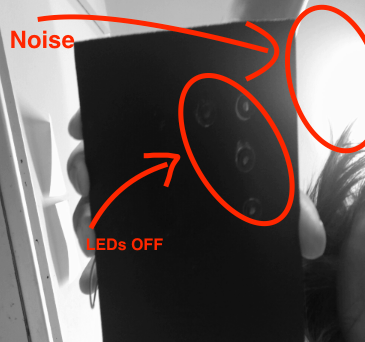

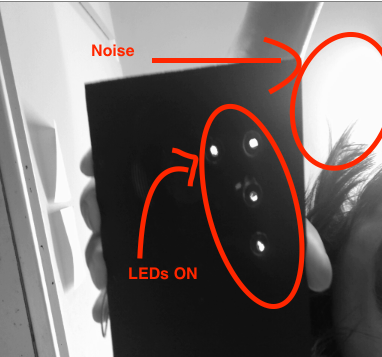

The first thing the algorithm does is filter out static noise (e.g. the sun or another light source) that the software could mistake for an LED. To do this, the algorithm takes two successive images, one with the LEDs on and one with the LEDs off, and then subtracts one from the other so that only the LEDs remain. For this to work, the LEDs have to be flashed at the same frequency at which the camera takes pictures.
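The subtraction step can be sketched as follows. This is a minimal illustration rather than the project's actual code: it assumes grayscale frames stored as nested lists of 0-255 intensities, and the function name `subtract_frames` and the threshold of 50 are hypothetical choices. On the Raspberry Pi, an array library would likely be used instead of plain lists.

```python
def subtract_frames(frame_on, frame_off, threshold=50):
    """Return a binary mask of pixels that are much brighter with the LEDs on.

    frame_on / frame_off: grayscale images as lists of rows (assumed format).
    threshold: hypothetical cut-off for "bright enough to be an LED".
    """
    mask = []
    for row_on, row_off in zip(frame_on, frame_off):
        mask_row = []
        for p_on, p_off in zip(row_on, row_off):
            # Static light sources appear in both frames and cancel out.
            diff = max(p_on - p_off, 0)
            mask_row.append(1 if diff > threshold else 0)
        mask.append(mask_row)
    return mask


# The bright pixel at (0, 2) is present in both frames (e.g. the sun),
# so only the two LED pixels survive the subtraction.
frame_off = [
    [10, 10, 250, 10],
    [10, 10, 10, 10],
    [10, 10, 10, 10],
]
frame_on = [
    [10, 10, 250, 10],
    [10, 240, 10, 10],
    [10, 10, 10, 230],
]
mask = subtract_frames(frame_on, frame_off)
```

Clamping the difference at zero discards pixels that became darker between frames, which cannot be LEDs turning on.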

To accentuate the LEDs and suppress the surroundings, template matching with a Gaussian filter is performed on the image.
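One way to read this step is as a correlation of the image with a small Gaussian-shaped template, so that compact bright blobs of roughly LED size respond more strongly than isolated bright pixels. The sketch below works under that assumption; the kernel size (5), sigma (1.0), zero padding at the borders, and the function names are illustrative choices that the write-up does not specify.

```python
import math


def gaussian_kernel(size=5, sigma=1.0):
    """Build a normalised size x size Gaussian template (weights sum to 1)."""
    half = size // 2
    kernel = [
        [math.exp(-(x * x + y * y) / (2 * sigma * sigma))
         for x in range(-half, half + 1)]
        for y in range(-half, half + 1)
    ]
    total = sum(sum(row) for row in kernel)
    return [[v / total for v in row] for row in kernel]


def correlate(image, kernel):
    """Slide the template over the image; pixels outside the image count as 0."""
    h, w = len(image), len(image[0])
    k = len(kernel)
    half = k // 2
    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            acc = 0.0
            for i in range(k):
                for j in range(k):
                    rr, cc = r + i - half, c + j - half
                    if 0 <= rr < h and 0 <= cc < w:
                        acc += image[rr][cc] * kernel[i][j]
            out[r][c] = acc
    return out


# A 3x3 LED-like blob in the middle and one isolated noisy pixel in a corner.
image = [[0] * 7 for _ in range(7)]
for r in range(2, 5):
    for c in range(2, 5):
        image[r][c] = 200
image[0][6] = 200

kernel = gaussian_kernel(5, 1.0)
response = correlate(image, kernel)

# Location of the strongest response (the centre of the blob).
peak = max(
    ((r, c) for r in range(7) for c in range(7)),
    key=lambda rc: response[rc[0]][rc[1]],
)
```

Because the template is symmetric, correlation and convolution give the same result here. The isolated pixel still produces a response, but a much weaker one than the blob, so a threshold on the response map can separate the two.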

K-Means:

The K-means algorithm is used to cluster the bright pixels (i.e. the LEDs) together so that later processing has fewer points to work with, which makes it less computationally intensive. The basic idea behind K-means clustering is to assign each point to the nearest of a set of centroids (initially randomly generated points). How close a point is to a given centroid is determined by its Euclidean distance to that centroid compared with its distance to the other centroids.
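The clustering described above can be sketched with the standard K-means iteration (assign each point to its nearest centroid, then move each centroid to the mean of its points). This is an illustrative version, not the project's code: the function name, the fixed iteration cap, and the seeded random generator are assumptions, and the initial centroids are drawn from the input points rather than generated anywhere in the image.

```python
import math
import random


def kmeans(points, k, iterations=20, seed=0):
    """Cluster 2D points into k groups; returns (centroids, clusters)."""
    rng = random.Random(seed)
    # Assumption: start from k randomly chosen input points.
    centroids = rng.sample(points, k)
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid (Euclidean).
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for i, members in enumerate(clusters):
            if members:
                cx = sum(x for x, _ in members) / len(members)
                cy = sum(y for _, y in members) / len(members)
                new_centroids.append((cx, cy))
            else:
                new_centroids.append(centroids[i])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # converged: assignments can no longer change
        centroids = new_centroids
    return centroids, clusters


# Bright pixels from two LEDs, given as (x, y) coordinates.
bright_pixels = [(10, 10), (11, 10), (10, 12), (50, 50), (51, 52), (52, 50)]
centroids, clusters = kmeans(bright_pixels, k=2)
```

After clustering, each LED is represented by a single centroid, so the recognition phase works on a handful of points instead of every bright pixel.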

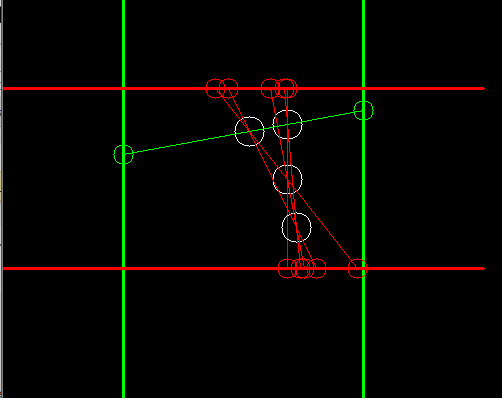

Collinearity and L-shape search:

Collinearity and L-shape search form the recognition phase of the algorithm. The configuration of the LEDs was chosen so that the drone's position and orientation are easy to identify. After clustering the bright pixels with the K-means algorithm, the system searches for points (i.e. bright spots) that are collinear (i.e. lie on a straight line). It then searches for the L-shape that distinguishes the drone from any possible noise: for every collinear triplet, the algorithm looks for a centroid at right angles to the line of collinearity. After locating the L-shape that the LEDs form, the algorithm draws an 'L' and superimposes it on the actual shape.
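The search can be sketched as below. The exact LED layout is not given here, so this sketch assumes three collinear LEDs forming the long arm of the 'L' and a fourth LED perpendicular to that arm at one of its ends. The function names and both tolerances are hypothetical and would need tuning against real images.

```python
import math
from itertools import combinations


def is_collinear(a, b, c, tol=0.05):
    """True if three points lie roughly on a line.

    Uses twice the triangle area, normalised by the squared longest side,
    so the tolerance does not depend on how large the drone appears.
    """
    area2 = abs((b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0]))
    longest = max(math.dist(a, b), math.dist(b, c), math.dist(a, c))
    return longest > 0 and area2 / (longest * longest) < tol


def find_l_shape(centroids, angle_tol=0.1):
    """Return the first L-shape found among the centroids, or None."""
    for triplet in combinations(centroids, 3):
        if not is_collinear(*triplet):
            continue
        # The two points farthest apart are the ends of the long arm.
        start, end = max(combinations(triplet, 2),
                         key=lambda pair: math.dist(*pair))
        direction = (end[0] - start[0], end[1] - start[1])
        length = math.hypot(*direction)
        for p in centroids:
            if p in triplet:
                continue
            for corner in (start, end):
                v = (p[0] - corner[0], p[1] - corner[1])
                v_len = math.hypot(*v)
                if v_len == 0:
                    continue
                # |cos(angle)| near 0 means v is at right angles to the arm.
                cos_angle = abs(direction[0] * v[0] + direction[1] * v[1]) / (length * v_len)
                if cos_angle < angle_tol:
                    return {"line": triplet, "corner": corner, "foot": p}
    return None


# Four LED centroids forming an 'L', plus one stray bright spot.
centroids = [(10, 10), (20, 10), (30, 10), (10, 25), (47, 3)]
shape = find_l_shape(centroids)
```

Under this assumed layout, the corner tells the system where the drone is, and the direction from the corner along the long arm gives its heading, since the short arm breaks the symmetry.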